Your Agent Doesn't Need a Better Vector DB. It Needs Procedural Memory.

Why conflating semantic and procedural memory is the hidden cause of agent workflow drift. The two-layer architecture that actually works.

We spent six months watching our agents forget how to do their jobs.

Not forget facts — we are drowning in facts. Our vector store has 40,000+ embeddings from customer calls, runbooks, and Slack threads. The agents could retrieve any of it. What they could not do was execute a procedure correctly twice in a row.

The incident that broke our patience was routine: an agent needed to post a weekly summary to Linear, a workflow we had supposedly “taught” it months ago. It retrieved the memory, generated a summary that was semantically similar to what we had shown it before, and then executed the steps in an order that created the ticket before creating the project, which violated Linear’s API constraints. The task failed. The customer was confused. We had the memory. We just did not have the procedure.

The Category Error Everyone Makes

Cognitive psychology draws a sharp line between declarative memory — conscious recall of facts and events — and procedural memory — unconscious execution of learned skills. You can tell someone that you can ride a bike (declarative) while being completely unable to articulate how you stay balanced (procedural). The knowledge is represented differently, stored differently, recalled differently.

The AI community has spent two years building increasingly sophisticated declarative memory — bigger context windows, hierarchical embeddings, multi-modal vector stores. We have optimized retrieval for similarity while ignoring that similarity is the wrong metric for sequence.

When you store a procedure in a vector database, you are making a bet that semantic similarity will preserve operational correctness. That bet loses in three predictable ways:

- Step omission. A retrieved procedure is truncated or chunked poorly, dropping critical steps that were not “semantically salient” enough.

- Step reordering. Cosine similarity has no concept of before and after. Steps get reordered based on embedding proximity, not dependency chains.

- Near-miss substitution. The LLM confuses two similar procedures — “deploy to staging” and “deploy to production” — because their embeddings overlap.

We hit all three. Repeatedly. Our logs showed agents retrieving “customer onboarding” memories and executing them for employee offboarding because the embeddings were similar enough to pass the threshold. The vector DB was doing exactly what it was designed to do. The design was wrong for the job.

What We Actually Built

We did not abandon our vector store. We stopped pretending it could do procedural work. Today our architecture has two memory layers with explicit contracts.

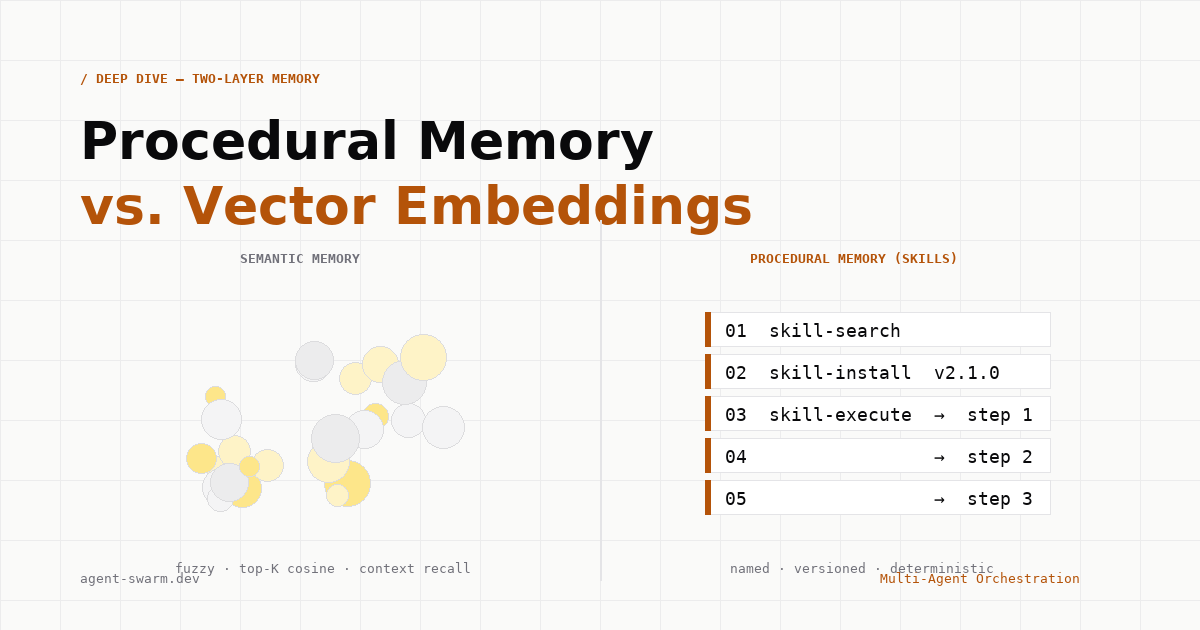

Semantic memory is for context recall, pattern matching, opportunistic retrieval. Implementation: SQLite plus 512-dim text-embedding-3-small with brute-force cosine. Contract: top-K matches with confidence scores, no execution guarantees, partial match is a feature.

Procedural memory (skills) is for deterministic execution of named, versioned procedures. Implementation: 12 dedicated tool primitives — skill-create, skill-install, skill-publish, skill-search, skill-execute, and friends — with explicit lifecycle. Contract: immutable procedures, explicit triggers, deterministic execution order, partial match is a bug.

The skill system lives alongside but never inside the vector store. Here is what the tool surface actually looks like:

// src/tools/skills/skill-create.ts

// Creates a new skill version from a validated procedure definition

interface SkillCreateInput {

name: string; // Globally unique identifier

version: string; // Semver, immutable after publish

trigger: TriggerConfig; // When to invoke this skill

procedure: ProcedureStep[]; // Ordered, validated steps

requiredPermissions: string[];

}

// Example: Linear weekly report skill

const linearWeeklyReport = {

name: "linear.weekly-summary",

version: "2.1.0",

trigger: {

type: "schedule",

cron: "0 9 * * MON",

intentMatch: /generate weekly linear report/,

},

procedure: [

{ step: 1, tool: "linear.project-create", required: true },

{ step: 2, tool: "linear.ticket-create", dependsOn: [1] },

{ step: 3, tool: "linear.comment-post", dependsOn: [2] },

],

};The critical insight: skill-search and skill-execute are different tools. Search returns metadata — name, version, trigger conditions — without execution capability. Execution only happens through skill-execute with explicit version pinning and dependency validation.

// src/tools/skills/skill-execute.ts

// Execution with strict validation of step order and dependencies

export async function skillExecute(

input: SkillExecuteInput,

context: AgentContext,

): Promise<SkillResult> {

// Load exact version, never "latest"

const skill = await skillRegistry.loadExactVersion(

input.name,

input.version, // Must be explicitly specified

);

// Validate all dependencies are satisfied

for (const step of skill.procedure) {

if (step.dependsOn) {

const depsSatisfied = step.dependsOn.every(

(d) => context.completedSteps.has(d),

);

if (!depsSatisfied) {

throw new DependencyError(

`Step ${step.step} requires unresolved dependencies: ${step.dependsOn}`,

);

}

}

}

// Execute in strict procedure order

return executeInOrder(skill.procedure, context);

}Why Skills Cannot Live in Vectors

The temptation is strong. Your vector DB is already there. Why not just store procedures with high-quality embeddings and retrieve them with clever prompting? Because the failure modes are structural, not implementation quality.

Consider two procedures with similar purposes but incompatible sequences:

// Procedure A: Customer Onboarding

1. Create Stripe customer

2. Send welcome email

3. Provision Slack workspace

4. Schedule kickoff call

// Procedure B: Employee Offboarding

1. Archive Slack workspace

2. Cancel Stripe subscriptions

3. Send exit survey

4. Schedule knowledge transfer

// Embedding similarity: 0.87 (high)

// Execution compatibility: 0 (these must NEVER be confused)In our experience, embedding-based retrieval could not reliably distinguish these. Both involve Stripe, Slack, email, and scheduling. The semantic payload — create vs archive, welcome vs exit — is linguistically subtle and structurally critical. Vector similarity treats “provision” and “archive” as related operations (both workspace management). Procedural correctness treats them as mutually exclusive branches.

We tried all the obvious fixes: better chunking, dependency annotations in the text, retrieval-augmented ranking. The fundamental problem persisted — the retrieval metric (cosine similarity) was optimizing for a different objective than the execution requirement (exact sequence match).

The Installation Contract

Skills are not globally available. They must be explicitly installed per agent, with version pinning that survives agent restarts and migrations. This is the skill-install tool:

// src/tools/skills/skill-install.ts

// Per-agent skill installation with version pinning

interface SkillInstallInput {

skillName: string;

version: string; // Pinned, not "latest"

agentId: string;

approvedBy: string; // Human or policy reference

installOptions: {

autoTrigger: boolean; // Can this skill self-trigger?

requireConfirmation: boolean; // Step through or auto-execute?

maxConcurrency: number; // Parallel execution limits

};

}

// Installation creates a binding record.

// The skill is now in this agent's procedural memory.

// Not retrievable via semantic search — only via skill-list.This separation is load-bearing. An agent with customer-management skills installed cannot accidentally retrieve offboarding procedures from semantic memory because the offboarding skill was never installed. The agent’s procedural memory is a closed set, explicitly managed. Its semantic memory remains open, opportunistic, fuzzy — appropriate for context, catastrophic for procedure.

Real Failure: The Database Migration Incident

Our most painful validation came during a production database migration. We had a procedure, stored in our vector DB with extensive documentation, for “Migrate customer data between PostgreSQL instances.” The embedding captured the intent perfectly.

When an agent needed to run this during an incident, it retrieved the memory and generated a plan. The plan was semantically coherent — backup, migrate, verify, cutover. But the specific steps it generated differed from our tested procedure in critical ways: it omitted the logical replication pause, reordered the verification steps, and substituted a similar but incompatible connection string for the new instance.

The migration completed. Data was lost — not much, but enough. The post-mortem revealed that no single component failed. The vector retrieval returned relevant-looking content. The LLM generated plausible-looking steps. The execution environment ran them faithfully. The system was structurally unsound, not operationally buggy.

That procedure is now a skill: db.migrate-customer-data, version 1.3.2, with explicit dependency validation that prevents execution if logical replication is active. The agent cannot “creatively adapt” the procedure because the procedure is not text to be adapted. It is compiled, validated, versioned code.

What Does Not Work (And Why)

Before arriving at this architecture, we tried several approaches that appeared reasonable but failed in practice:

- Retrieval with structured output. Forcing the LLM to return JSON procedures via carefully crafted prompts. Failed because the LLM can generate valid JSON that does not match the actual tested procedure. Structure without provenance.

- Embedding-based step ranking. Retrieving candidate steps and using embeddings to order them. Failed because step dependencies are logical, not semantic. Step 2 might use completely different vocabulary from Step 1 but depend on it.

- Memory as few-shot examples. Storing past executions as examples for the LLM to generalize from. Failed because generalization is the bug, not the feature. We do not want the agent to generalize from five good migrations. We want it to execute the one correct migration procedure.

Each of these treats the symptom — inaccurate retrieval — while ignoring the disease — procedures should not be retrieved at all. They should be invoked.

How We Think About Memory Now

Our mental model is now explicit: memory has two types with incompatible contracts. When an agent needs context — “what did we decide about the billing integration?” — it uses semantic search. The results are suggestions, weighted by relevance, to be synthesized by the LLM. Partial matches are expected and useful.

When an agent needs to act — “run the weekly billing report” — it uses procedural invocation. The result must be exact, versioned, and deterministic. Partial matches are bugs.

| Dimension | Semantic memory | Procedural memory (skills) |

|---|---|---|

| Query | “What do I know about X?” | “Execute X” |

| Match quality | Cosine similarity, fuzzy | Name + version, exact |

| Result structure | Unordered bag of context | Ordered dependency graph |

| Partial match | Feature (approximate recall) | Bug (procedures are atomic) |

| Lifecycle | Continuous accumulation | Explicit install/uninstall/version |

The Tool Surface in Practice

Agents in our system have 12 skill-related tools available. They are designed to make the two-layer architecture explicit in the agent’s reasoning:

skill-search— find skills by name pattern or trigger, returns metadata onlyskill-describe— get procedure steps for a specific version (read-only)skill-install— add skill to agent’s procedural memoryskill-uninstall— remove skill, breaking existing triggersskill-list-installed— what can this agent execute?skill-execute— run installed skill with dependency validationskill-create— author new skill (requires validation)skill-publish— make skill immutable and available for installskill-fork— create new version from existingskill-deprecate— mark version unsupported (does not break pinned installs)skill-sync-remote— pull skills from configured registriesskill-audit— review execution history, rollbacks

Notice what is missing: there is no skill-retrieve-semantically. The agent cannot “find a skill kind of like X” and execute it. Skills are named and versioned or they do not exist. The LLM can request a skill by name, describe it before installing it, and execute it with confidence. It cannot improvise skills because improvisation is where the bugs live.

Migration Path for Existing Systems

If you are currently storing procedures in your vector store, the migration is not disruptive — it is clarifying. You likely already have text that describes procedures. Keep that in semantic memory; it is useful documentation. Extract the executable core — the ordered steps, the dependencies, the validation rules — into the skill system.

We have found that about 60% of our “procedural” memories were actually documentation — context for humans, not instructions for agents. The remaining 40% became skills, and most shrank significantly once we removed the explanatory text and kept only the executable structure.

The Prediction

The next generation of agent frameworks will ship with two memory primitives by default. The idea that “just use a vector DB” is sufficient for agent memory will look as quaint as “just use a single global variable” looks today — technically possible for simple cases, catastrophically wrong for anything production-grade.

We are already seeing this bifurcation in the ecosystem. Systems that handle serious workloads are growing explicit procedure layers — structured tool calling, OS-level task primitives, our skill system. The pattern is converging because the failure mode is universal. When your agent’s behavior depends on embedding similarity, your agent’s behavior is non-deterministic in ways that matter.

The hard-won lesson: optimize your vector store for what it is good at — flexible, fuzzy, creative recall of context. Build a separate system for what must be exact — named, versioned, deterministic execution of procedure. Your agents will stop forgetting how to do their jobs. More importantly, they will stop “remembering” how to do them wrong.